Introducing the Mirage

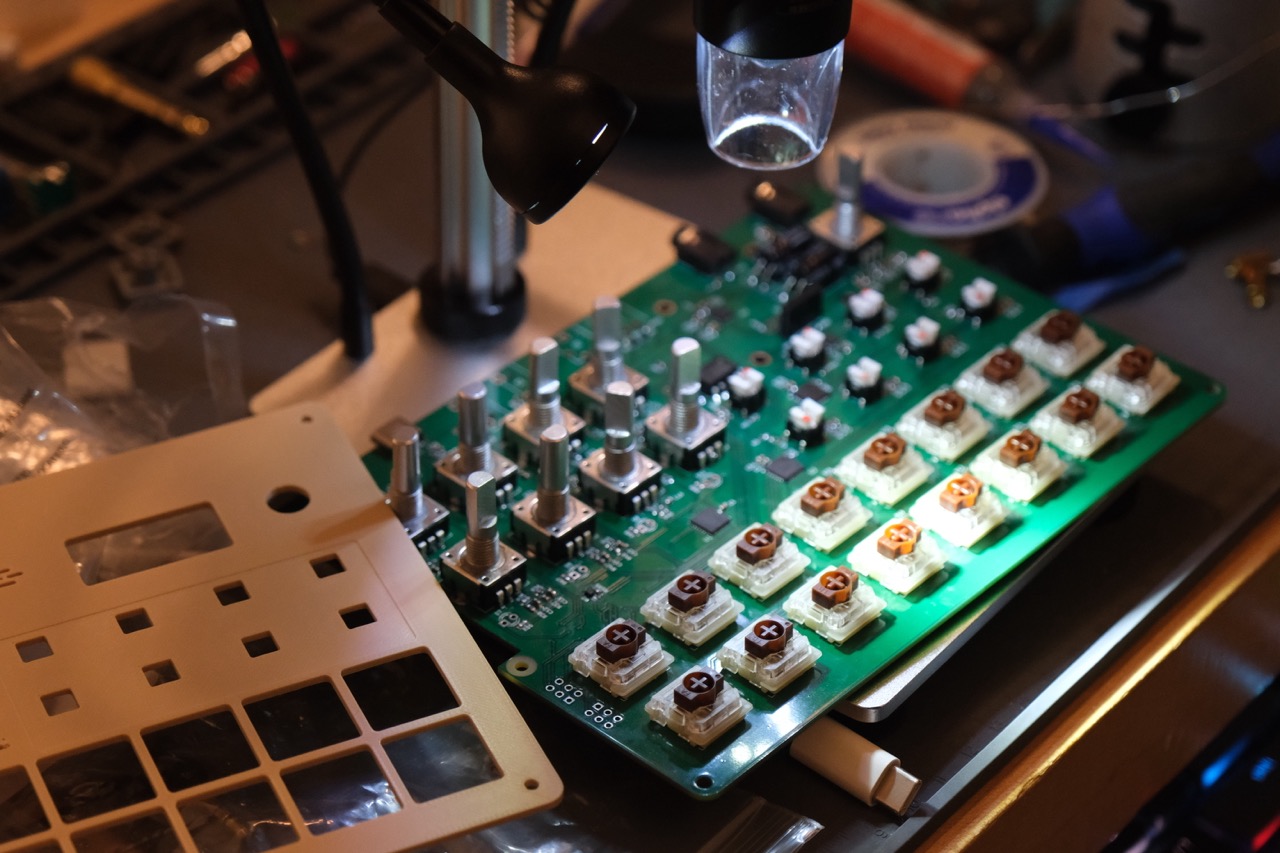

Assembling the Mirage Rev 2.

TL;DR: I’m launching the Mirage, a hardware sampler that generates new sounds in real time using embedded generative audio models. It combines traditional sampling with voice control and audio “model bending”, giving musicians new ways to explore and create unique sounds.

Contact me or sign up as a tester to help shape Mirage’s future.

How do we make AI art less boring?

People have mixed feelings about AI. Everyone I talk to – from engineers to physicians to artists – agrees on one thing: AI changes their relationship with their labor. The response to this, however, varies quite a bit. This ambivalence is most apparent in creative fields, where AI boosters are selling the ability to generate whole books, movies, albums, and works of visual art from a few prompts. From an efficiency perspective, this is optimal. But art has never been about efficiency: it’s about history, the artist’s abilities and limits, and the artistic process. Many artists are responding to these efficiency promises with rightful animus and distrust. The artists, in fact, are not the customer. Their bosses are.

As a researcher and a musician, I’ve been exploring the intersection of AI/ML and art for several years. I’ve trained models to generate uncanny plants and insects for album art; coaxed haunting sounds out of audio models by mangling their inputs; developed poetic songwriting tools that induce hallucinations from speech recognition models. I’ve developed the take that “AI art” is definitionally boring – that is, if a black box AI model controls the entire process, the output is “AI art” and is therefore boring. It doesn’t say much about the person who made it and what their talents and limitations are as an artist, nor does it convey much about the history and situational context that shaped it. This is because many AI tools are not designed with artists in mind: they are optimizing for efficiency over human agency. This makes them effective “content generators,” but not necessarily effective artistic tools.

The more human touch that shapes a creative process, the more it takes the shape of the person who made it, and the less boring its output becomes. AI is not fundamentally incompatible with art, but the tools need to fit within a human process rather than replace it.

How do we make AI more compatible with human creativity? We do this by designing tools that provide the rudiments for people to develop their own processes. These tools should be explorable, tweakable, and most importantly breakable – the less prescriptive they are about their use, the more they can be shaped by an artist’s personal touch. Guitar amplifiers don’t protect you from distortion, nor do blank canvases demand that you paint on them in a certain way.

The piano ain’t got no wrong notes.

–– Thelonious Monk

Introducing the Mirage

The Mirage is a new breed of hardware sampler that lets musicians create new samples on the fly using generative audio models. It’s the first piece of musical hardware to embed generative audio models directly in the box, without any requirement for an internet connection or subscription. Users prompt the Mirage via a voice interface, powered by Moonshine.

Most importantly, the Mirage lets you deliberately break its audio model to create weird, glitchy sounds. I call this model bending (inspired by circuit bending). I’ve accomplished this tweakability – and high performance despite resource constraints – by hand-rolling embedded inference code for the MusicGen models that the Mirage uses for sample generation.

My hope is that model bending will inspire weird, uncanny, and interesting sounds that meet the moment, shaped by the touch of musicians who adapt it to their creative processes.

Features

In addition to a familiar set of hardware sampler features – a step sequencer, multiple audio channels, sample slicing, FX, CV and MIDI I/O – the Mirage incorporates three new audio generation concepts. I’ll introduce each with a short demo video.

Blank-slate generation

In an empty audio channel, simply give a short prompt for a sound you’d like to hear and the Mirage generates it:

Guided generation

The Mirage can also build on existing sounds using your text prompt as a guide. Let’s say I have a nice pad sound, and I want a sample in a certain genre with a matching timbre:

Model bending

I developed some interesting ways to glitch the model’s audio decoder while building the Mirage’s embedded audio generation. Each of these model bending parameters has a knob attached, allowing musicians to tweak the model and generate musically inspiring outputs.
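For readers curious what "a knob attached to a model parameter" could look like in code, here is a toy sketch in Python. It is not the Mirage's inference code and makes no claim about how its bends actually work: the decoder is a stand-in lookup table rather than MusicGen's audio decoder, and every name in it (`toy_decode`, `bend_tokens`, `bend_codebook`, `token_knob`, `weight_knob`) is hypothetical. It only illustrates the general idea that a value between 0 and 1 can scale how much a decoder's inputs or weights are corrupted before audio is rendered.

```python
import math
import random


def make_codebook(size=8, chunk=16):
    """Toy 'decoder weights': each entry is a short sine chunk at a different pitch."""
    return [
        [math.sin(2 * math.pi * (k + 1) * n / chunk) for n in range(chunk)]
        for k in range(size)
    ]


def toy_decode(tokens, codebook):
    """Stand-in decoder: each token selects one waveform chunk from the codebook."""
    samples = []
    for t in tokens:
        samples.extend(codebook[t])
    return samples


def bend_tokens(tokens, knob, vocab_size, rng):
    """Hypothetical bend: with probability `knob`, swap a token for a random one."""
    return [rng.randrange(vocab_size) if rng.random() < knob else t for t in tokens]


def bend_codebook(codebook, knob, rng):
    """Hypothetical bend: add uniform noise, scaled by `knob`, to every weight."""
    return [[x + knob * rng.uniform(-1.0, 1.0) for x in row] for row in codebook]


def render(tokens, codebook, token_knob=0.0, weight_knob=0.0, seed=0):
    """Decode tokens to samples after applying both bends; knobs range from 0 to 1."""
    rng = random.Random(seed)  # seeded so a given knob setting is repeatable
    bent_tokens = bend_tokens(tokens, token_knob, len(codebook), rng)
    bent_codebook = bend_codebook(codebook, weight_knob, rng)
    return toy_decode(bent_tokens, bent_codebook)


if __name__ == "__main__":
    codebook = make_codebook()
    tokens = [0, 3, 5, 1]
    clean = render(tokens, codebook)
    glitched = render(tokens, codebook, token_knob=0.3, weight_knob=0.5)
    print(len(clean), len(glitched))
```

With both knobs at zero the output is the unmodified decode, and turning either knob up moves the result progressively further from it, which is the property that makes a bend playable from a physical control.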

What’s next?

What I’ve shown here is the second revision of the Mirage hardware. It’s a platform I’ll continue to iterate on, with some avenues left to explore:

- User testing. The Mirage’s UX makes sense to me given workflows I’m personally used to. Now I want to understand how others use it. Does it have the right basic features? Are you able to get the sounds you want? Does it fit in your overall workflow?

- Product design. The Mirage’s electronics hardware and software are manufacturable, but the enclosure design is a 3D-printed prototype. I’d love to chat with people who have industrial design and manufacturing experience to see how – given demand – the fit and finish could be polished and moved into small-batch production.

- Partnerships. Do you work in the music hardware space? If so, please reach out. The Mirage is the first salvo in what I believe will be a rich market for hardware products with embedded audio AI.

If the Mirage has caught your interest, please contact me or sign up as a tester.

Thanks for reading!